Klaus Finke’s Commodore 64/128 GEOS Disk Collection

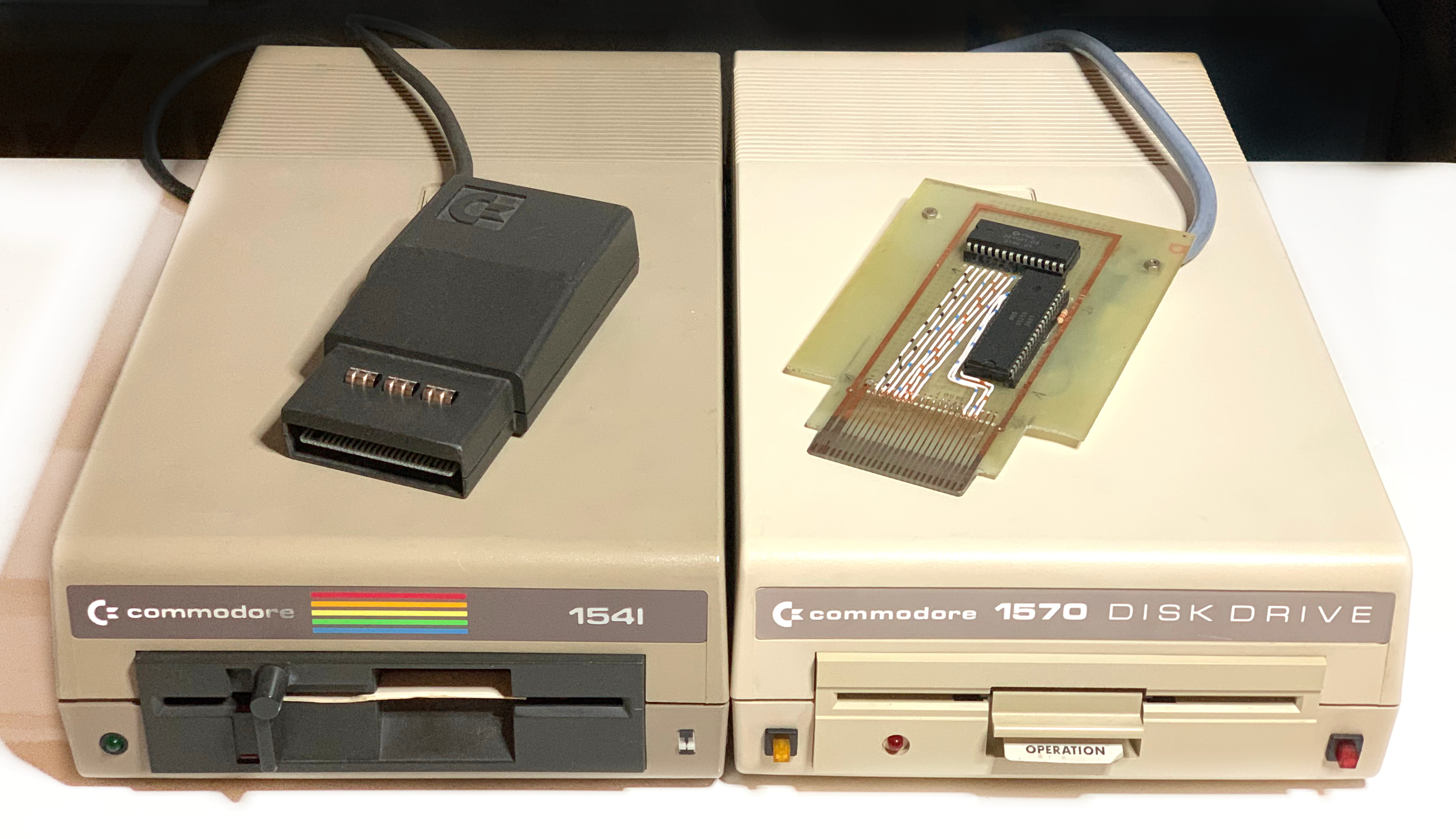

This is a collection of 111 Commodore 64/128 disk images from a box of high-density floppy disks for the CMD FD-2000 drive. The disks came from the estate of Klaus Finke (1945–2016), a GEOS community organizer from Suhl, Thuringia.